The topics of methodological individualism and microfoundationalism unavoidably intersect with the idea of reductionism, the notion that higher-level entities and structures need somehow to be “reduced” to facts or properties having to do with lower-level structures. In the social sciences, this amounts to something along these lines: the properties and dynamics of social entities need to be explained by the properties and interactions of the individuals who constitute them. Social facts need to reduce to a set of individual-level facts and laws. Similar positions arise in psychology (“psychological properties and dynamics need to reduce to facts about the activities and properties of the central nervous system”) and biology (“complex biological systems like genes and cells need to reduce to the biochemistry of the interacting systems of molecules that make them up”).

Reductionism has a bad flavor within much of philosophy, but it is worth dwelling on the concept a bit more fully.

Why would the strategy of reduction be appealing within a scientific research tradition? Here is one reason: there is an evident explanatory gain in showing how the complex properties and workings of a higher-level entity result from the properties and interactions of its lower-level constituents. This kind of demonstration serves to explain the upper-level system’s properties in terms of the entities that make it up. This is the rationale for Peter Hedström’s metaphor of “dissecting the social” (Dissecting the Social: On the Principles of Analytical Sociology); in his words,

To dissect, as the term is used here, is to decompose a complex totality into its constituent entities and activities and then to bring into focus what is believed to be its most essential elements. (kl 76)

Aggregate or macro-level patterns usually say surprisingly little about why we observe particular aggregate patterns, and our explanations must therefore focus on the micro-level processes that brought them about. (kl 141)

The explanatory strategy illustrated by Thomas Schelling in Micromotives and Macrobehavior proceeds in a similar fashion. Schelling wants to show how a complex social phenomenon (say, residential segregation) can be the result of a set of preferences and beliefs of the independent individuals who make up the relevant population. And this is also the approach that is taken by researchers who develop agent-based models (link).
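
The logic of this kind of model can be sketched in a few lines of Python. What follows is a minimal illustration in the spirit of Schelling's checkerboard model, not his exact specification: the grid size, the 30 percent tolerance threshold, the wrap-around edges, the rule that discontented agents move to a random empty cell, and all function names are assumptions chosen for brevity.

```python
import random

def like_share(grid, r, c):
    """Fraction of occupied neighboring cells holding the same type as (r, c)."""
    n = len(grid)
    same = occupied = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            if dr == 0 and dc == 0:
                continue
            other = grid[(r + dr) % n][(c + dc) % n]  # edges wrap around
            if other is not None:
                occupied += 1
                same += (other == grid[r][c])
    return same / occupied if occupied else 1.0  # no neighbors: treated as content

def mean_like_share(grid):
    """Average like-neighbor share over all agents (a crude segregation index)."""
    n = len(grid)
    shares = [like_share(grid, r, c)
              for r in range(n) for c in range(n) if grid[r][c] is not None]
    return sum(shares) / len(shares)

def run_schelling(n=20, empty_frac=0.1, threshold=0.3, sweeps=50, seed=0):
    """Return the segregation index before and after agents relocate."""
    rng = random.Random(seed)
    n_empty = int(n * n * empty_frac)
    n_agents = n * n - n_empty
    cells = ["A"] * (n_agents // 2) + ["B"] * (n_agents - n_agents // 2)
    cells += [None] * n_empty
    rng.shuffle(cells)
    grid = [cells[i * n:(i + 1) * n] for i in range(n)]
    empties = [(r, c) for r in range(n) for c in range(n) if grid[r][c] is None]
    before = mean_like_share(grid)

    for _ in range(sweeps):
        moved = False
        coords = [(r, c) for r in range(n) for c in range(n)]
        rng.shuffle(coords)
        for r, c in coords:
            # Agents are content if at least `threshold` of their neighbors match.
            if grid[r][c] is None or like_share(grid, r, c) >= threshold:
                continue
            k = rng.randrange(len(empties))
            i, j = empties[k]
            grid[i][j], grid[r][c] = grid[r][c], None
            empties[k] = (r, c)  # the vacated cell becomes available
            moved = True
        if not moved:  # everyone is content; the pattern is stable
            break
    return before, mean_like_share(grid)

if __name__ == "__main__":
    before, after = run_schelling()
    print(f"mean like-neighbor share: before={before:.2f}, after={after:.2f}")
```

Calling `run_schelling()` returns the average share of like neighbors before and after relocation. In typical runs the second number is well above the first, even though no agent in this sketch demands a like-type majority; that gap between mild individual preferences and a marked aggregate pattern is the kind of result the micro-to-macro strategy is meant to explain.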

Why is the appeal to reduction sometimes frustrating to other scientists and philosophers? Because it often seems to be a way of changing the subject away from our original scientific interest. We started out, let’s say, with an interest in motion perception, looking at the perceiver as an information-processing system, and the reductionist keeps insisting that we turn our attention to the organization of a set of nerve cells. But we weren’t interested in nerve cells; we were interested in the computational systems associated with motion perception.

Another reason to be frustrated with “methodological reductionism” is the conviction that mid-level entities have stable properties of their own. So it isn’t necessary to reduce those properties to their underlying constituents; rather, we can investigate those properties in their own terms, and then make use of this knowledge to explain other things at that level.

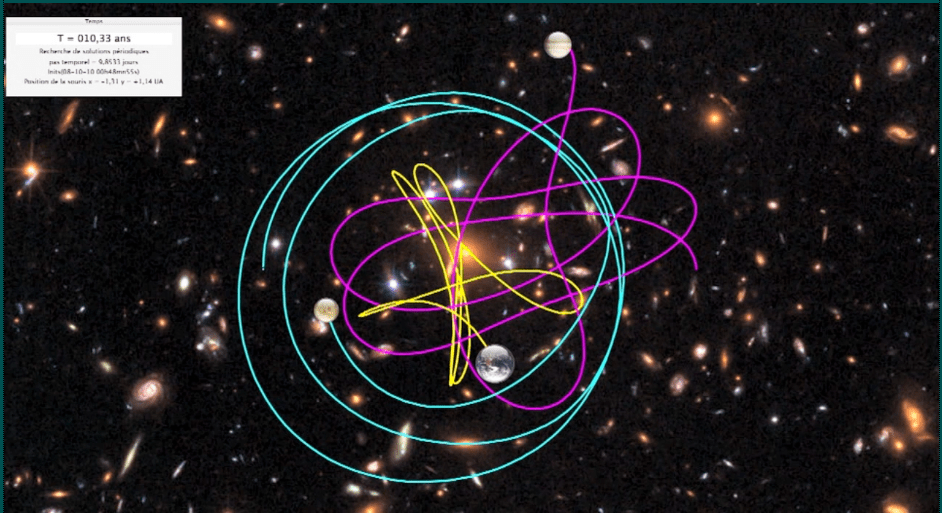

Finally, it is often simply impossible to reconstruct with any useful precision the micro-level processes that give rise to a given higher-level structure. The mathematical properties of complex systems come in here: even relatively simple physical systems, governed by deterministic mechanical laws, can exhibit behavior that cannot in practice be calculated from information about the starting conditions of the system. A solar system with a massive star at the center and a handful of relatively low-mass planets produces a regular set of nearly elliptical orbits. But a general three-body gravitational system creates computational challenges that make it practically impossible to predict the state of the system far into the future; even small errors of measurement or intruding forces can significantly shift the evolution of the system. (Here is an interesting animation of a three-body gravitational system; the image above is a screenshot.)
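
The sensitivity at issue can be illustrated numerically. The sketch below is a toy under stated assumptions, not a research-grade integrator: three equal masses in a plane, units with G = 1, a small softening term to keep close encounters finite, a fixed-step velocity Verlet scheme, and initial conditions picked arbitrarily. It runs the same system twice, with one coordinate of one body nudged by a tiny amount, and records how far apart the two runs drift.

```python
import math

G = 1.0           # gravitational constant in arbitrary units (assumption)
SOFTENING = 1e-2  # keeps forces finite in close encounters (assumption)

def accelerations(pos, masses):
    """Softened Newtonian gravitational acceleration on each body (2D)."""
    n = len(pos)
    acc = [[0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            dx = pos[j][0] - pos[i][0]
            dy = pos[j][1] - pos[i][1]
            inv_r3 = (dx * dx + dy * dy + SOFTENING ** 2) ** -1.5
            acc[i][0] += G * masses[j] * dx * inv_r3
            acc[i][1] += G * masses[j] * dy * inv_r3
    return acc

def simulate(pos, vel, masses, dt, steps):
    """Velocity Verlet integration; returns the list of positions at each step."""
    pos = [p[:] for p in pos]
    vel = [v[:] for v in vel]
    history = [[p[:] for p in pos]]
    acc = accelerations(pos, masses)
    for _ in range(steps):
        for i in range(len(pos)):
            for k in range(2):
                vel[i][k] += 0.5 * dt * acc[i][k]  # half kick
                pos[i][k] += dt * vel[i][k]        # drift
        acc = accelerations(pos, masses)
        for i in range(len(pos)):
            for k in range(2):
                vel[i][k] += 0.5 * dt * acc[i][k]  # second half kick
        history.append([p[:] for p in pos])
    return history

def divergence(eps=1e-6, dt=0.005, steps=4000):
    """Distance between a reference run and a run whose start is nudged by eps."""
    masses = [1.0, 1.0, 1.0]
    pos = [[-1.0, 0.0], [1.0, 0.0], [0.0, 0.5]]   # arbitrary starting layout
    vel = [[0.0, -0.4], [0.0, 0.4], [0.0, 0.0]]   # arbitrary starting velocities
    nudged = [p[:] for p in pos]
    nudged[0][0] += eps  # the "measurement error" on one coordinate
    ref = simulate(pos, vel, masses, dt, steps)
    alt = simulate(nudged, vel, masses, dt, steps)
    return [
        math.sqrt(sum((pa[k] - pb[k]) ** 2
                      for pa, pb in zip(sa, sb) for k in range(2)))
        for sa, sb in zip(ref, alt)
    ]

if __name__ == "__main__":
    seps = divergence()
    print(f"initial gap: {seps[0]:.3e}, largest gap: {max(seps):.3e}")
```

The list returned by `divergence()` begins at the size of the nudge, and its growth over the run shows how quickly an initial error is amplified. How fast the gap grows depends on the assumed starting configuration and step size, so the numbers are illustrative only.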

We might capture part of this set of ideas by noting that we can distinguish broadly between vertical and lateral explanatory strategies. Reduction is a vertical strategy. The discovery of the causal powers of a mid-level entity and use of those properties to explain the behavior of other mid-level entities and processes is a lateral or horizontal strategy. It remains within a given level of structure rather than moving up and down over two or more levels.

William Wimsatt is a philosopher of biology whose writings about reduction have illuminated the topic significantly. His article “Reductionism and its heuristics: Making methodological reductionism honest” is particularly useful (link). Wimsatt distinguishes among three varieties of reductionism in the philosophy of science: inter-level reductive explanations, same-level reductive theory succession, and eliminative reduction (448). He finds that eliminative reduction is a non-starter; virtually no scientists see value in attempting to eliminate references to the higher-level domain in favor of a lower-level domain. Inter-level reduction is essentially what was described above. And theory-succession reduction is a mapping from one theory to the next of the ontologies that they depend upon. Here is his description of “successional reduction”:

Successional reductions commonly relate theories or models of entities which are either at the same compositional level or they relate theories that aren’t level-specific…. They are relationships between theoretical structures where one theory or model is transformed into another … to localize similarities and differences between them. (449)

I suppose an example of this kind of reduction is the mapping of the quantum theory of the atom onto the classical theory of the atom.

Here is Wimsatt’s description of inter-level reductive explanation:

Inter-level reductions explain phenomena (entities, relations, causal regularities) at one level via operations of often qualitatively different mechanisms at lower levels. (450)

Here is an example he offers of the “reduction” of Mendel’s factors in biology:

Mendel’s factors are successively localized through mechanistic accounts (1) on chromosomes by the Boveri–Sutton hypothesis (Darden, 1991), (2) relative to other genes in the chromosomes by linkage mapping (Wimsatt, 1992), (3) to bands in the physical chromosomes by deletion mapping (Carlson, 1967), and finally (4) to specific sites in chromosomal DNA thru various methods using PCR (polymerase chain reaction) to amplify the number of copies of targeted segments of DNA to identify and localize them (Waters, 1994).

What I find useful about Wimsatt’s approach is the fact that he succeeds in de-dramatizing this issue. He puts aside the comprehensive and general claims that have sometimes been made on behalf of “methodological reductionism” in the past, and considers specific instances in biology where scientists have found it very useful to investigate the vertical relations that exist between higher-level and lower-level structures. This takes reductionism out of the domain of a general philosophical principle and into that of a particular research heuristic.

Dan – Once again, thanks for this discussion; I was unfamiliar with Wimsatt’s paper. I hope all is well. Jim

PS: Have you seen the symposium on GMO and foods in the most recent Boston Review? I recall you saying that you and your daughter are at odds on the matter. It is an interesting collection of arguments on the relevant matters.


Thanks, Jim. Yes, I’ve seen the BR symposium. As always, a great read! Hope to see you in Ann Arbor one day! Dan
