Complex socio-technical systems fail; that is, accidents occur. Engineers and policy makers urgently need a better way of thinking about accidents than the current protocol that follows an air crash, a chemical plant fire, or the release of a contaminated drug. We need to understand better the system-level and organizational causes of an accident; even more importantly, we need a basis for improving the safe functioning of complex socio-technical systems by identifying better processes and better warning indicators of impending failure.

A longtime leader in the field of systems-safety thinking is Nancy Leveson, a professor of aeronautics and astronautics at MIT and the author of Safeware: System Safety and Computers (1995) and Engineering a Safer World: Systems Thinking Applied to Safety (2012). Leveson has been a particular advocate for two insights: treating safety as a systems characteristic, and looking for the organizational and social components of safety and accidents as well as the technical event histories that are more often the focus of accident analysis. Her approach to safety and accidents involves looking at a technology system in terms of the set of controls and constraints that have been designed into the process to prevent accidents. “Accidents are seen as resulting from inadequate control or enforcement of constraints on safety-related behavior at each level of the system development and system operations control structures.” (25)

The abstract for her essay “A New Accident Model for Engineering Safety” (link) captures both points.

> New technology is making fundamental changes in the etiology of accidents and is creating a need for changes in the explanatory mechanisms used. We need better and less subjective understanding of why accidents occur and how to prevent future ones. The most effective models will go beyond assigning blame and instead help engineers to learn as much as possible about all the factors involved, including those related to social and organizational structures. This paper presents a new accident model founded on basic systems theory concepts. The use of such a model provides a theoretical foundation for the introduction of unique new types of accident analysis, hazard analysis, accident prevention strategies including new approaches to designing for safety, risk assessment techniques, and approaches to designing performance monitoring and safety metrics.

The accident model she describes in this article and elsewhere is STAMP (Systems-Theoretic Accident Model and Processes). Here is a short description of the approach.

> In STAMP, systems are viewed as interrelated components that are kept in a state of dynamic equilibrium by feedback loops of information and control. A system in this conceptualization is not a static design—it is a dynamic process that is continually adapting to achieve its ends and to react to changes in itself and its environment. The original design must not only enforce appropriate constraints on behavior to ensure safe operation, but the system must continue to operate safely as changes occur. The process leading up to an accident (loss event) can be described in terms of an adaptive feedback function that fails to maintain safety as performance changes over time to meet a complex set of goals and values…. The basic concepts in STAMP are constraints, control loops and process models, and levels of control. (12)

The other point of emphasis in Leveson’s treatment of safety is her consistent effort to include the social and organizational forms of control that are a part of the safe functioning of a complex technological system.

> Event-based models are poor at representing systemic accident factors such as structural deficiencies in the organization, management deficiencies, and flaws in the safety culture of the company or industry. An accident model should encourage a broad view of accident mechanisms that expands the investigation from beyond the proximate events. (6)

She treats the organizational backdrop of the technology process in question as being a crucial component of the safe functioning of the process.

> Social and organizational factors, such as structural deficiencies in the organization, flaws in the safety culture, and inadequate management decision making and control are directly represented in the model and treated as complex processes rather than simply modeling their reflection in an event chain. (26)

And she treats organizational features as another form of control system (along the lines of Jay Forrester’s early definitions of systems in Industrial Dynamics).

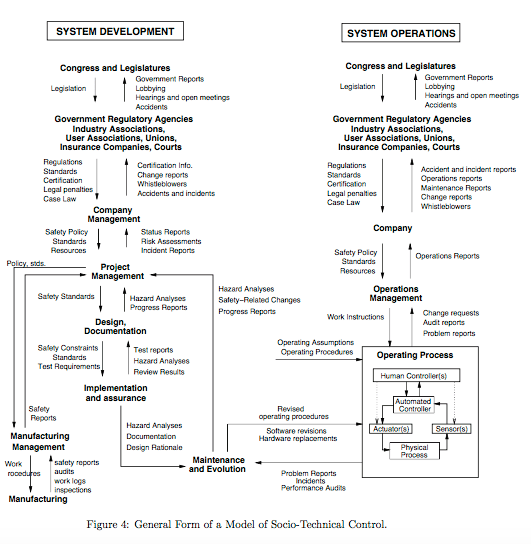

Modeling complex organizations or industries using system theory involves dividing them into hierarchical levels with control processes operating at the interfaces between levels (Rasmussen, 1997). Figure 4 shows a generic socio-technical control model. Each system, of course, must be modeled to reflect its specific features, but all will have a structure that is a variant on this one. (17)

Here is figure 4:

The approach embodied in the STAMP framework is that safety is a systems effect, dynamically influenced by the control systems embodied in the total process in question.

> In STAMP, systems are viewed as interrelated components that are kept in a state of dynamic equilibrium by feedback loops of information and control. A system in this conceptualization is not a static design—it is a dynamic process that is continually adapting to achieve its ends and to react to changes in itself and its environment. The original design must not only enforce appropriate constraints on behavior to ensure safe operation, but the system must continue to operate safely as changes occur. The process leading up to an accident (loss event) can be described in terms of an adaptive feedback function that fails to maintain safety as performance changes over time to meet a complex set of goals and values. (12)

And:

> In systems theory, systems are viewed as hierarchical structures where each level imposes constraints on the activity of the level beneath it—that is, constraints or lack of constraints at a higher level allow or control lower-level behavior (Checkland, 1981). Control laws are constraints on the relationships between the values of system variables. Safety-related control laws or constraints therefore specify those relationships between system variables that constitute the nonhazardous system states, for example, the power must never be on when the access door is open. The control processes (including the physical design) that enforce these constraints will limit system behavior to safe changes and adaptations. (17)
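The interlock example in this passage can be made concrete with a small sketch. This is only an illustration of the idea that a safety constraint is a relationship between system variables and that a control process enforces it; it is not taken from Leveson's paper. The names `MachineState`, `violates_constraint`, `interlock`, and `simulate`, and the four event labels, are all hypothetical.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class MachineState:
    """Two system variables, following the power/door example in the quotation."""
    power_on: bool = False
    door_open: bool = False


def violates_constraint(state: MachineState) -> bool:
    """Safety constraint: the power must never be on while the door is open."""
    return state.power_on and state.door_open


def apply_event(state: MachineState, event: str) -> MachineState:
    """Apply one operator action (hypothetical event names) to the state."""
    if event == "power_on":
        return replace(state, power_on=True)
    if event == "power_off":
        return replace(state, power_on=False)
    if event == "open_door":
        return replace(state, door_open=True)
    if event == "close_door":
        return replace(state, door_open=False)
    raise ValueError(f"unknown event: {event}")


def interlock(state: MachineState) -> MachineState:
    """Control process: cut the power whenever the constraint would be violated."""
    if violates_constraint(state):
        return replace(state, power_on=False)
    return state


def simulate(events, interlock_enabled: bool):
    """Run the events and count the steps that end in a hazardous state."""
    state = MachineState()
    hazardous_steps = 0
    for event in events:
        state = apply_event(state, event)
        if interlock_enabled:
            state = interlock(state)
        if violates_constraint(state):
            hazardous_steps += 1
    return state, hazardous_steps


if __name__ == "__main__":
    events = ["power_on", "open_door", "close_door"]
    print("with interlock:   ", simulate(events, interlock_enabled=True))
    print("without interlock:", simulate(events, interlock_enabled=False))
```

Running the same event sequence with the interlock disabled lets the system enter the hazardous state. That is the STAMP reading of an accident in miniature: the events are identical, and what differs is whether the constraint is enforced.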

Leveson’s understanding of systems theory brings along with it a strong conception of “emergence”. She argues that higher levels of systems possess properties that cannot be reduced to the properties of the components, and that safety is one such property:

> In systems theory, complex systems are modeled as a hierarchy of levels of organization, each more complex than the one below, where a level is characterized by having emergent or irreducible properties. Hierarchy theory deals with the fundamental differences between one level of complexity and another. Its ultimate aim is to explain the relationships between different levels: what generates the levels, what separates them, and what links them. Emergent properties associated with a set of components at one level in a hierarchy are related to constraints upon the degree of freedom of those components. (11)

But her understanding of “irreducible” seems to be different from that commonly used in the philosophy of science. She does in fact believe that these higher-level properties can be explained by the system of properties at the lower levels — for example, in this passage she asks “… what generates the levels” and how the emergent properties are “related to constraints” imposed on the lower levels. In other words, her position seems to be similar to that advanced by Dave Elder-Vass (link): emergent properties are properties at a higher level that are not possessed by the components, but which depend upon the interactions and composition of the lower-level components.

The domain of safety engineering and accident analysis seems like a particularly suitable place for Bayesian analysis. Accident analysis unavoidably involves both frequency-based probabilities (e.g. the frequency of pump failure) and expert-based estimates of the likelihood of a particular kind of failure (e.g. the likelihood that a train operator will pay less attention to track warnings in response to company pressure on the timetable). Bayesian techniques are well suited to combining these different kinds of risk estimates into a unified calculation.
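One simple way to see how such a combination could work is a Beta-binomial update, in which an expert's judgment about a failure probability serves as the prior and observed failure counts revise it. The sketch below is a minimal illustration under that assumption, not a method proposed by Leveson. The numbers are invented: an expert estimate of 0.02 weighted as if it were worth 50 observations, and 3 failures observed in 200 demands. The names `BetaBelief`, `prior_from_expert`, and `update` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BetaBelief:
    """Beta(alpha, beta) belief about a per-demand failure probability."""
    alpha: float
    beta: float

    @property
    def mean(self) -> float:
        return self.alpha / (self.alpha + self.beta)


def prior_from_expert(estimate: float, equivalent_trials: float) -> BetaBelief:
    """Encode an expert estimate as a Beta prior.

    `equivalent_trials` expresses how much weight the judgment carries,
    stated as a number of hypothetical observations.
    """
    if not 0.0 < estimate < 1.0:
        raise ValueError("estimate must lie strictly between 0 and 1")
    if equivalent_trials <= 0:
        raise ValueError("equivalent_trials must be positive")
    return BetaBelief(estimate * equivalent_trials,
                      (1.0 - estimate) * equivalent_trials)


def update(prior: BetaBelief, failures: int, trials: int) -> BetaBelief:
    """Conjugate update: add observed failures and successes to the prior."""
    if not 0 <= failures <= trials:
        raise ValueError("failures must be between 0 and trials")
    return BetaBelief(prior.alpha + failures,
                      prior.beta + (trials - failures))


if __name__ == "__main__":
    # Invented illustrative numbers; see the lead-in above.
    prior = prior_from_expert(estimate=0.02, equivalent_trials=50)
    posterior = update(prior, failures=3, trials=200)
    print(f"prior mean:     {prior.mean:.4f}")
    print(f"posterior mean: {posterior.mean:.4f}")
```

With these numbers the posterior mean is 4/250 = 0.016, between the expert's 0.02 and the observed rate of 0.015, and it moves toward the observed rate as more data accumulate. A realistic analysis would combine many such quantities across a system model, so this shows only the basic logic of the update.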

The topic of safety and accidents is particularly relevant to Understanding Society because it expresses very clearly the causal complexity of the social world in which we live. And rather than simply ignoring that complexity, the systematic study of accidents gives us an avenue for arriving at better ways of representing, modeling, and intervening in parts of that complex world.