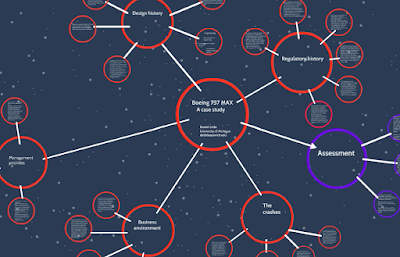

The topic of the organizational causes of technology failure comes up frequently in Understanding Society. The tragic crashes of two Boeing 737 MAX aircraft in the past year present an important case to study. Is this an instance of pilot error (as has occasionally been suggested)? Is it a case of engineering and design failures? Or are there important corporate and regulatory failures that created the environment in which the accidents occurred, as the public record seems to suggest?

The formal accident investigations are not yet complete, and the FAA and other air safety agencies around the world have not yet cleared the aircraft to fly again since its certification was suspended after the second crash. There will certainly be a detailed expert study of this case at some point in the future, and I will be eager to read the resulting book. In the meantime, though, it is useful to bring the perspectives of Charles Perrow, Diane Vaughan, and Andrew Hopkins to bear on what we can learn about this case from the public media sources that are available. The preliminary case study sketched below is a first effort, intended simply to help us learn more about the social and organizational processes that govern the complex technologies upon which we depend. Many of the dysfunctions identified in the safety literature appear to have played a role in this disaster.

I have made every effort to offer an accurate summary based on publicly available sources, but readers should bear in mind that it is a preliminary effort.

The key conclusions I’ve been led to include these:

The aircraft's updated flight control system, the Maneuvering Characteristics Augmentation System (MCAS), created the conditions for crashes under rare flight conditions and in the event of instrument failures.

- Faults in the angle-of-attack (AOA) sensor and the MCAS flight control system persisted through the design process

- Pilot training and information about changes in the flight control system were likely inadequate to permit pilots to override the control system when necessary

There were fairly clear signs of organizational dysfunction in the development and design process for the aircraft:

- Disempowered mid-level experts (engineers, designers, software experts)

- Inadequate organizational embodiment of safety oversight

- Business priorities placing cost savings, timeliness, profits over safety

- Executives with divided incentives

- Breakdown of internal management controls leading to faulty manufacturing processes

Cost containment and speed trumped safety. It is hard to avoid the conclusion that the corporation put cost-cutting and speed ahead of the professional advice and judgment of its engineers. Management pushed the design and certification process aggressively, leading to the implementation of a control system that could fail in foreseeable flight conditions.

The regulatory system seems to have been at fault as well, with the FAA taking a deferential attitude towards the company’s assertions of expertise throughout the certification process. The regulatory process was “outsourced” to a company that already has inordinate political clout in Congress and the agencies.

- Inadequate government regulation

- FAA lacked direct expertise and oversight sufficient to detect design failures

- Too much influence by the company over regulators and legislators

Here is a video presentation of the case as I currently understand it (link).

See also this earlier discussion of regulatory failure in the 737 MAX case (link). Here are works by several experts on organizational failure that are especially relevant to the current case:

Charles Perrow, Normal Accidents: Living with High-Risk Technologies

Diane Vaughan, The Challenger Launch Decision: Risky Technology, Culture, and Deviance at NASA, Enlarged Edition

Andrew Hopkins, Lessons from Longford: The ESSO Gas Plant Explosion